Researchers have invented a new, hyper-realistic form of autotune using AI

The new technology was created by researchers at Johns Hopkins University

Research students at Johns Hopkins University have designed a new type of autotune that pitch-corrects recorded vocals with more accuracy and transparency than existing autotune plugins and tools.

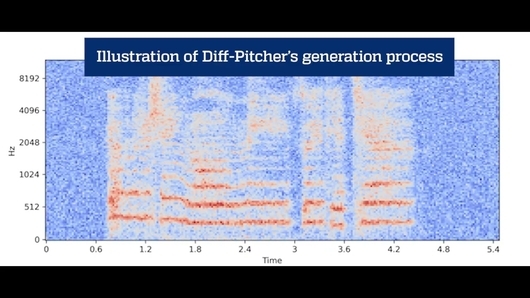

The project, called Diff-Pitcher, uses AI and deep learning to learn from training data what in-key singing sounds like, and can then resynthesise existing recordings so that the corrected vocals sound more natural.

Speaking about the project, Jiarui Hai, a student who worked as part of the team behind it, said: “Diff-Pitcher is a generative deep neural network that takes pitch correction technology to a new level. Its precision and control can not only help musical artists and producers but also open new possibilities in areas such as voice rehabilitation and assistive technologies.

“[The results sound] really natural,” Hai continued, “and unlike in older ways of fixing pitch, we can still regulate how high or low the voice goes.”

You can hear the new technology in action in the video below.

Last year, a survey showed that 50% of artists wouldn’t admit to using AI in their music-making process.